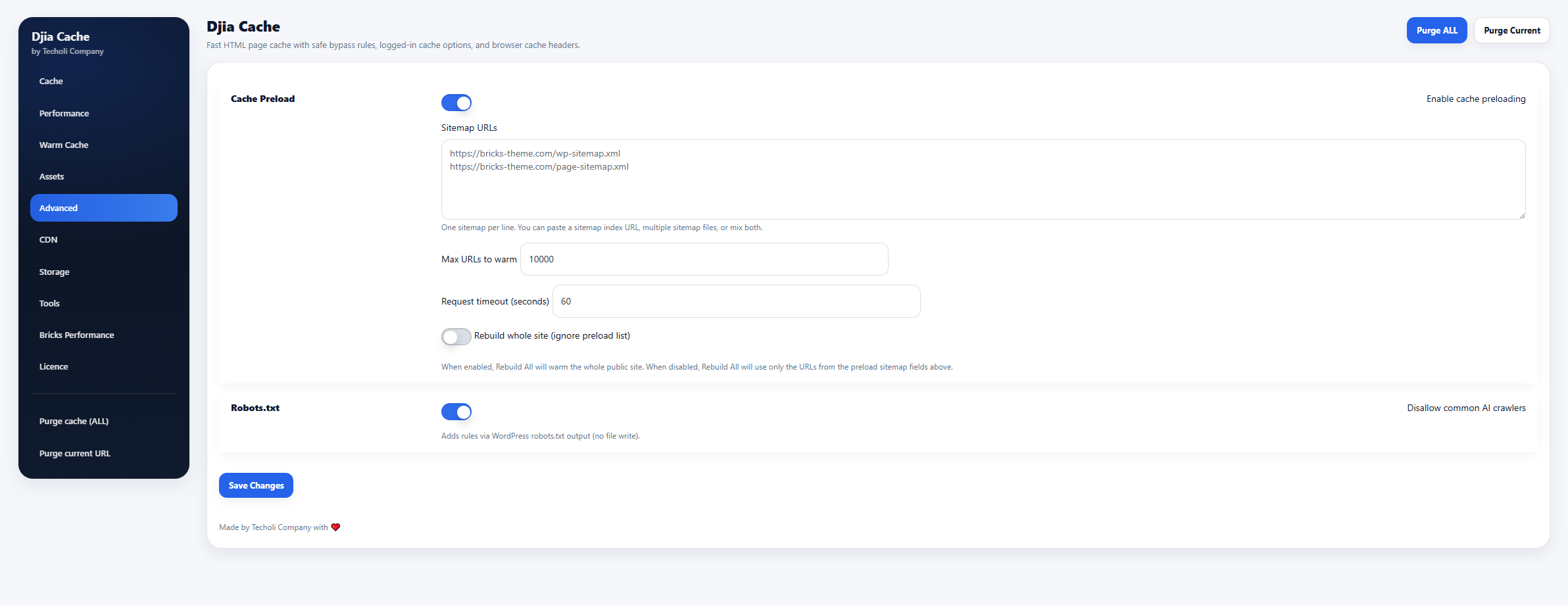

DJIA Cache – Advanced Tab

The Advanced tab contains additional tools for cache preloading and robots.txt management. These options give you more control over how the cache is generated and how search engines and other automated crawlers interact with the site.

Sections included in this tab:

- Cache Preload

- Robots.txt

Cache Preload

Cache preloading allows DJIA Cache to generate cached pages in advance instead of waiting for visitors to open them.

This helps ensure pages are already cached when users arrive, improving performance and reducing server load.

Enable cache preloading

Enables manual cache preloading using URLs from a sitemap.

When enabled, DJIA Cache can fetch pages in the background and generate cached versions before visitors request them.

Benefits:

- faster first visit performance

- warm cache after cache purge

- faster responses for search engine crawlers, which receive pages that are already cached

Sitemap URL

Defines the sitemap used to extract URLs for cache warming.

Example:

https://example.com/sitemap.xml

DJIA Cache reads the sitemap and sequentially requests each URL to generate cached versions.

You can use:

- WordPress native sitemaps

- SEO plugin sitemaps

- custom sitemap files
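As a rough illustration of the URL extraction step, the sketch below pulls the `<loc>` entries out of a standard `<urlset>` sitemap. It is not DJIA Cache's own code: the function name `extract_urls` and the sample XML are assumptions made for the example, and sitemap index files (a sitemap that lists other sitemaps) would need one more level of fetching that is not shown here.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol for sitemap.xml files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def extract_urls(sitemap_xml: str) -> list[str]:
    """Return the <loc> values of a <urlset> sitemap, in document order."""
    root = ET.fromstring(sitemap_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


# Hypothetical sitemap content used only for this example.
SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/about/</loc></url>
</urlset>"""

print(extract_urls(SAMPLE))
```

The URLs come back in sitemap order, which matches the sequential processing described above.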

Max URLs to warm

Limits the number of URLs processed in a single preload run.

Example:

50

This prevents the preload process from consuming too many server resources at once.

If the sitemap contains more URLs, they will be processed in later runs.
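One way to picture the limit is as a sliding window over the sitemap's URL list. The sketch below is an assumption about how such batching could work, not a description of the plugin's internals: the `next_batch` function and the stored `offset` are hypothetical, and how DJIA Cache actually remembers its position between runs is not documented here.

```python
def next_batch(urls: list[str], offset: int, max_urls: int) -> tuple[list[str], int]:
    """Return up to max_urls URLs starting at offset, plus the offset for the next run."""
    batch = urls[offset:offset + max_urls]
    next_offset = offset + len(batch)
    # Start over from the top once the whole sitemap has been covered.
    if next_offset >= len(urls):
        next_offset = 0
    return batch, next_offset


# Hypothetical sitemap with 120 URLs and a limit of 50 per run.
urls = [f"https://example.com/page-{i}" for i in range(120)]
offset = 0
for run in range(3):
    batch, offset = next_batch(urls, offset, 50)
    print(f"run {run + 1}: {len(batch)} URLs, next offset {offset}")
```

With 120 URLs and a limit of 50, the three runs cover 50, 50, and 20 URLs, after which the offset returns to 0.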

Request timeout (seconds)

Defines the maximum time allowed for each warmed request.

Example:

5

If a page takes longer than the timeout value to respond, the request will be skipped.

This prevents the preload process from getting stuck on slow pages.
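The skip-on-timeout behavior can be sketched as a loop that gives each request a fixed time budget and moves on when it fails. This is a minimal illustration under stated assumptions: the names `warm` and `http_fetch` are hypothetical, and the plugin's real request logic may differ. The `fetch` parameter exists only so the example can run without network access.

```python
import urllib.request


def http_fetch(url: str, timeout: float) -> None:
    """Request a page and read the body so the server renders it fully."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read()


def warm(urls, timeout_seconds=5, fetch=http_fetch):
    """Request each URL in order and return (warmed, skipped) lists."""
    warmed, skipped = [], []
    for url in urls:
        try:
            fetch(url, timeout_seconds)
            warmed.append(url)
        except OSError:
            # Timeouts and urllib's URLError are both OSError subclasses;
            # skip the page and continue with the next URL.
            skipped.append(url)
    return warmed, skipped


# Stand-in fetcher for the example: pretends that /slow/ never responds in time.
def fake_fetch(url: str, timeout: float) -> None:
    if url.endswith("/slow/"):
        raise TimeoutError(f"no response within {timeout} seconds")


warmed, skipped = warm(
    ["https://example.com/", "https://example.com/slow/"],
    timeout_seconds=5,
    fetch=fake_fetch,
)
print("warmed:", warmed)
print("skipped:", skipped)
```

A lower timeout keeps each run short but may skip pages that are slow precisely because they are not cached yet, so the value is a trade-off between run time and coverage.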

Robots.txt

This section controls additional robots.txt rules generated by DJIA Cache.

Rules are injected dynamically using WordPress’ robots.txt output and do not modify the actual robots.txt file on disk.

Disallow common AI crawlers

Adds disallow rules for known AI data collection crawlers.

These crawlers often collect content for training large language models and can generate heavy server load.

When enabled, DJIA Cache adds rules to block common AI crawlers such as:

- GPTBot

- Google-Extended

- CCBot

- ClaudeBot

- PerplexityBot

- Bytespider

Example rules generated:

User-agent: GPTBot

Disallow: /

User-agent: CCBot

Disallow: /
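To see what rules of this shape do, the sketch below builds one `User-agent` / `Disallow` group per crawler and checks the result with Python's standard `urllib.robotparser`. The `build_rules` helper is hypothetical and is not how DJIA Cache generates its output; it only reproduces the format shown above for the crawlers listed in this section.

```python
from urllib.robotparser import RobotFileParser

# Crawler names taken from the list in this section.
AI_CRAWLERS = [
    "GPTBot",
    "Google-Extended",
    "CCBot",
    "ClaudeBot",
    "PerplexityBot",
    "Bytespider",
]


def build_rules(user_agents):
    """Return robots.txt text with one blocking group per user agent."""
    groups = [f"User-agent: {agent}\nDisallow: /\n" for agent in user_agents]
    # Groups are separated by a blank line, as in a regular robots.txt file.
    return "\n".join(groups)


rules = build_rules(AI_CRAWLERS)
print(rules)

# Check how a compliant crawler would interpret the rules.
parser = RobotFileParser()
parser.parse(rules.splitlines())
print(parser.can_fetch("GPTBot", "https://example.com/post/"))     # False
print(parser.can_fetch("Googlebot", "https://example.com/post/"))  # True
```

Crawlers that are not named, such as regular search engine bots, are unaffected by these groups. The rules only take effect for crawlers that choose to honor robots.txt.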

Benefits:

- reduces unnecessary crawling

- protects server resources

- discourages automated data scraping by crawlers that honor robots.txt